While the IT world is constantly changing and evolving, every once in a while a technology comes along that is capable of disrupting the industry. Looking back at the industry-disrupting technologies of the last 20 years, server virtualization and cloud computing lead the way. In a similar fashion, containers and container management (particularly Docker and Kubernetes) have the potential to completely transform the way we currently view virtualization. The purpose of this blog post is not to provide an in-depth look at containers or container management, but to give a brief comparison of containers and server virtualization, an overview of where we are today, and a look at their role in the data center.

Introduction to Containers

The concept of containers has been around for a number of years. Technologies such as chroot, jails, vPars, and Solaris Zones are all examples of containerization. However, the technology that most resembles containers as we know them today is Linux Containers (LXC). LXC solidified the concept of containerization, and the concept was taken to the next level by a company called dotCloud and its internal (home-grown) application packaging and distribution system, Docker. The dotCloud name is gone, but the company itself became Docker, Inc. Today, Docker is the de facto containerization technology and distribution platform.

Summary of Server Virtualization

To fully understand what containers are and what benefits they offer, it helps to start with a general summary of virtualization. While there are various types of virtualization (server, storage, network, etc.), this blog post focuses primarily on server virtualization.

Server virtualization has been around for a long time, so I don’t think there’s any need to break down the benefits, which are now widely known. However, it is important to provide a brief summary of how server virtualization works in order to understand the similarities and differences when compared to containerization.

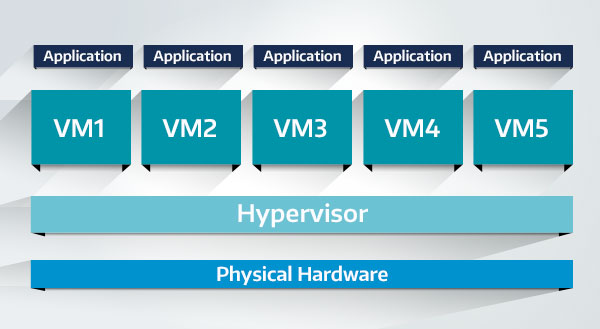

At a high level, how server virtualization works is easy to understand. In a nutshell, you have some physical servers which are converted to virtualization hosts (hypervisors) which in turn allow you to create virtual servers (VMs). If we take a deeper look at server virtualization, the infrastructure can be depicted as follows:

This server virtualization architecture has changed little since its inception in the late 1990s and early 2000s. While more and more high-availability features and management tools have been added, the underlying architecture has remained the same.

Server Virtualization—Hardware Virtualization

When we talk about server virtualization (e.g., VMware, Hyper-V, KVM), we are really talking about hardware virtualization. As can be seen in Figure 1, a hypervisor provides an abstraction layer over the physical hardware, and in turn provides "virtual hardware" to the virtual machines. Therefore, with server virtualization we are not virtualizing the server itself (i.e., the OS and applications running on the hardware); we are simply virtualizing the hardware. This means that many of the headaches associated with running applications on physical servers carry over into the virtual world. Even after server virtualization, the management tasks typically associated with physical servers remain (drivers, multiple operating systems to manage and patch, library incompatibilities, boot devices, etc.).

Containers—A Better Approach to Virtualization

Containers provide a new approach to virtualization by completely separating the applications from the hardware. This makes containers highly portable, scalable, and more manageable.

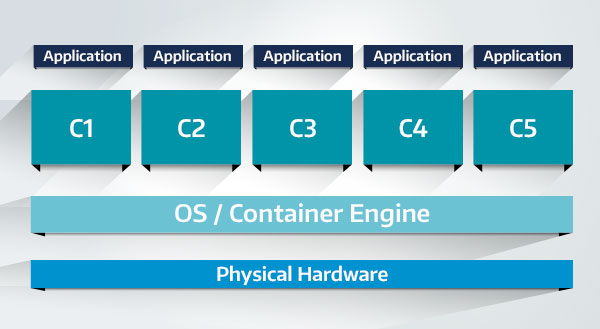

This separation of the application from the hardware is one of the major driving factors behind the rapid adoption of the cloud. At a high-level, containerization resembles the following diagram:

The diagram above may resemble the server virtualization diagram; however, there is only one operating system to manage. Each container (C1–C5) shares the kernel of the OS running on the physical hardware. This means that even if 100 containers are running on one server, there is still just one OS to manage, patch, etc. There are no drivers or boot devices to worry about for the containers. While a VM may take minutes to boot, a container typically starts in seconds or less, because there is no guest OS to boot: starting a container simply starts processes on the already-running host OS.
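
One quick way to see the shared kernel for yourself is to print the kernel release on the host and then inside a container. The sketch below uses Python's standard `platform.release()`, which reports the running kernel's release string (the same value `uname -r` prints on Linux). The function name `kernel_release` is just an illustrative helper, not part of any tool.

```python
import platform


def kernel_release() -> str:
    # platform.release() returns the release of the kernel this process runs on.
    # A container has no kernel of its own, so inside a container this reports
    # the host's kernel, whereas a VM would report its own guest kernel.
    return platform.release()


if __name__ == "__main__":
    print(kernel_release())
```

With Docker Engine on a Linux host, running the script on the host and then running `docker run --rm python:3 python -c "import platform; print(platform.release())"` should print the same release string. (With Docker Desktop on macOS or Windows, containers run inside a lightweight Linux VM, so the output is that VM's kernel rather than the desktop OS's.)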

Containers are all about the application, and their portability allows organizations to seamlessly move workloads between platforms (VMware, Hyper-V, KVM, public cloud, hybrid cloud, etc.).

Where We Are Today

There has been very rapid adoption of Docker and containers since Docker's public release in 2013. The pace of adoption has far outpaced the early adoption of server virtualization technologies such as VMware and Hyper-V. Containers are currently facing the same backlash that virtual machines faced at the dawn of server virtualization, and some of the same early misconceptions about virtual machines are now being applied to containers.

There is one major difference between the early adoption of virtual machines and that of containers: containers are being embraced by some of the largest IT companies. Google, for example, abandoned traditional server virtualization years ago; its infrastructure runs primarily on containers, managed internally by Borg, the system that inspired Kubernetes. Even companies that are traditionally not open to change, such as Microsoft, have embraced containers. Not only did Microsoft include native support for Docker and Windows Containers in Windows Server 2016, but it is also a strategic partner of Docker. Another example is Oracle, which now supports running Oracle Database 12c as a container.

Containers in the Data Center

Organizations (especially those with a cloud strategy) will continue to migrate workloads from traditional virtual machines to containers. This will not only increase portability and elasticity but also reduce overall expenditures. Because containers do not each carry a full guest OS, they achieve a much higher consolidation ratio than virtual machines, and therefore much higher resource utilization. In fact, some studies indicate double-digit resource utilization savings vs. traditional server virtualization.
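
To see why dropping the per-instance guest OS raises the consolidation ratio, here is a back-of-the-envelope sketch. All figures are hypothetical placeholders chosen for illustration, not measurements, and the model looks at memory only, ignoring CPU, I/O, and the footprint of the hypervisor or host OS itself.

```python
def max_instances(host_mem_mb: int, app_mem_mb: int, overhead_mb: int) -> int:
    # Each instance needs memory for the application plus its per-instance
    # overhead: a full guest OS for a VM, only a thin footprint for a container.
    return host_mem_mb // (app_mem_mb + overhead_mb)


# Hypothetical inputs, for illustration only.
HOST_MEM_MB = 128 * 1024      # a 128 GB host
APP_MEM_MB = 2048             # memory used by one application
VM_OVERHEAD_MB = 2048         # guest OS footprint per VM
CONTAINER_OVERHEAD_MB = 100   # per-container footprint (no guest OS)

vms = max_instances(HOST_MEM_MB, APP_MEM_MB, VM_OVERHEAD_MB)
containers = max_instances(HOST_MEM_MB, APP_MEM_MB, CONTAINER_OVERHEAD_MB)
print(f"VMs per host: {vms}, containers per host: {containers}")
```

With these made-up inputs the same host fits 32 VMs but 61 containers; the real ratio depends entirely on how large the guest OS overhead is relative to the application itself.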

The new trend in data center virtualization will be to run containers side by side with virtual machines. For example, a data center currently running 100 VMs may be able to reduce that to, say, 50 VMs and 50 containerized applications (which themselves run on top of a small number of container-host VMs). This reduces the number of operating systems to manage, patch, etc. by almost 50%. It also reduces overall memory and disk space consumption. As containers continue to gain acceptance (and application vendor support), data centers will continue to reduce their need for traditional VMs.
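
The "almost 50%" figure follows from simple counting. The sketch below assumes, hypothetically, that the 50 containerized applications are packed onto two dedicated container-host VMs; the `Estate` class and its field names are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class Estate:
    app_vms: int             # VMs that each run one application on their own guest OS
    container_host_vms: int  # VMs whose only job is to run containers
    containerized_apps: int  # applications packaged as containers

    def os_instances(self) -> int:
        # Every VM carries a guest OS that must be managed and patched.
        # Containers reuse the OS of the VM they run on, so they add nothing here.
        return self.app_vms + self.container_host_vms

    def apps(self) -> int:
        return self.app_vms + self.containerized_apps


before = Estate(app_vms=100, container_host_vms=0, containerized_apps=0)
# Two container-host VMs is a hypothetical choice for illustration.
after = Estate(app_vms=50, container_host_vms=2, containerized_apps=50)

assert before.apps() == after.apps() == 100  # same number of applications served
reduction_pct = (before.os_instances() - after.os_instances()) * 100 / before.os_instances()
print(f"OS instances: {before.os_instances()} -> {after.os_instances()} ({reduction_pct:.0f}% fewer)")
```

Under these assumptions the OS count drops from 100 to 52, a 48% reduction; each additional container-host VM shaves another point off the savings.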

Virtual machines are not going to disappear from the data center overnight, but as acceptance of the cloud continues to grow, along with the demand for highly scalable applications, VMs will become less and less attractive. Containers enable highly scalable applications based on microservices. The next generation of applications will move away from the monolithic model: instead of a single monolithic application, they will be made up of multiple containers, each providing a separate role or service. This increases not only portability but also elasticity. The phrase “born in the cloud” typically refers to such containerized applications.

Containers Are Our Future

Containers are here to stay and will continue to take market share from traditional virtualization technologies. It is important for us to understand containers, their impact, and how we can best capitalize on them. We may not “see” containers being used, but the truth is that we use them every day. Every time we use any of Google’s offerings, we’re using containers in the back end. Every time we watch a show or movie on Netflix, we’re using containers. Hulu’s new live-TV offering is driven by containers. For these and many other organizations, traditional VMs simply could not keep up with user demand. Traditional VMs are great at some things, but they fall short when it comes to portability, scalability, and elasticity. As traditional (monolithic) applications give way to new applications built on microservices, the demand for traditional server virtualization will continue to decline.